Little has changed in the way Java desktop UI is written since the original Java release. Technologies have changed (AWT, Swing, SWT, etc.), but the fundamentals remain the same. The developer must choose which widgets to use, how to lay those widgets out, how to store the data being edited and how to synchronize the model with the UI. Even the best developers fall into the trap of having UI components talk directly to other UI components rather than through the model. An inordinate amount of time is spent debugging layout and data-binding issues.

Sapphire aims to raise UI writing to a higher level of abstraction. The core premise is that the basic building block of UI should not be a widget (text box, label, button, etc.) but a property editor. Unlike a widget, a property editor analyzes the metadata associated with a given property, renders the appropriate widgets to edit that property and wires up data binding. Data is synchronized, validation is passed from the model to the UI, content assistance is made available, and so on.

This fundamentally changes the way developers interact with a UI framework. Instead of writing UI by telling the system how to do something, the developer tells the system what they intend to accomplish. When using Sapphire, the developer says "I want to edit the LastName property of the person object". When using widget toolkits like SWT, the developer says "create a label, create a text box, lay them out like so, configure their settings, set up data binding and so on". By the time the developer is done, it is hard to see the original goal in the resulting code. This results in UI that is inconsistent, brittle and difficult to maintain.
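To make the property-editor idea concrete, here is a toy sketch: a function that looks only at a property's metadata and decides which widgets to create. `PropertyMetadata`, `ToyPropertyEditor` and the widget descriptions are hypothetical names invented for illustration. Real Sapphire property editors render SWT widgets and also wire up data binding, validation and content assistance, none of which is modeled here.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical description of one property. In Sapphire this information
// comes from annotations on the model interface, not from a class like this.
final class PropertyMetadata {
    final String label;
    final Class<?> type;
    final boolean longString;

    PropertyMetadata( String label, Class<?> type, boolean longString ) {
        this.label = label;
        this.type = type;
        this.longString = longString;
    }
}

// Toy "property editor": chooses widget descriptions from metadata alone.
final class ToyPropertyEditor {
    static List<String> widgetsFor( PropertyMetadata property ) {
        List<String> widgets = new ArrayList<>();
        widgets.add( "label(" + property.label + ")" );
        if( property.type.isEnum() ) {
            // One combo entry per enum constant.
            widgets.add( "combo(" + property.type.getEnumConstants().length + " items)" );
        } else if( property.type == Boolean.class ) {
            widgets.add( "checkbox" );
        } else if( property.longString ) {
            widgets.add( "multi-line text" );
        } else {
            widgets.add( "text" );
        }
        return widgets;
    }
}
```

The caller only states which property it wants to edit; the decision about which widgets to create lives in one place instead of being repeated in every form.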

First, The Model

Sapphire includes a simple modeling framework that is tuned to the needs of the Sapphire UI framework and designed to be easy to learn. It is also optimized for iterative development. A Sapphire model is defined by writing Java interfaces and using annotations to attach metadata. An annotation processor that is part of the Sapphire SDK then generates the implementation classes. Sapphire leverages the Eclipse Java compiler to provide quick and transparent code generation that runs in the background while you work on the model. The generated classes are treated as build artifacts and are not checked into source control. In fact, you will rarely have any reason to look at them. All model authoring and consumption happens through the interfaces.
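Sapphire generates these implementation classes at compile time. Purely as an analogy for what "an implementation derived from an interface" means, the sketch below builds an implementation of a getter/setter interface at runtime with `java.lang.reflect.Proxy`, storing values in a map. `IPerson` and `MapBackedModel` are hypothetical, and a dynamic proxy is a swapped-in technique, not how Sapphire's annotation processor works.

```java
import java.lang.reflect.Proxy;
import java.util.HashMap;
import java.util.Map;

// Hypothetical model interface in the spirit of "edit the LastName property of the person object".
interface IPerson {
    String getLastName();
    void setLastName( String value );
}

final class MapBackedModel {
    // Creates an object implementing the given interface: getX() reads and
    // setX( value ) writes the entry "X" in a private map. Any other method,
    // including toString(), is unsupported in this sketch.
    static <T> T create( Class<T> type ) {
        Map<String, Object> values = new HashMap<>();
        Object instance = Proxy.newProxyInstance(
            type.getClassLoader(),
            new Class<?>[] { type },
            ( self, method, args ) -> {
                String name = method.getName();
                int argCount = args == null ? 0 : args.length;
                if( name.startsWith( "get" ) && argCount == 0 ) {
                    return values.get( name.substring( 3 ) );
                }
                if( name.startsWith( "set" ) && argCount == 1 ) {
                    values.put( name.substring( 3 ), args[ 0 ] );
                    return null;
                }
                throw new UnsupportedOperationException( name );
            } );
        return type.cast( instance );
    }
}
```

As with a generated class, all consumption happens through the interface; the caller never sees the implementing type.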

In this article we will walk through a Sapphire sample called EzBug. The sample is based on a scenario of building a bug reporting system. Let's start by looking at IBugReport.

```java
@GenerateXmlBinding
public interface IBugReport extends IModelElementForXml, IRemovable
{
    ModelElementType TYPE = new ModelElementType( IBugReport.class );

    // *** CustomerId ***

    @XmlBinding( path = "customer" )
    @Label( standard = "customer ID" )
    ValueProperty PROP_CUSTOMER_ID = new ValueProperty( TYPE, "CustomerId" );

    Value<String> getCustomerId();
    void setCustomerId( String value );

    // *** Title ***

    @XmlBinding( path = "title" )
    @Label( standard = "title" )
    @NonNullValue
    ValueProperty PROP_TITLE = new ValueProperty( TYPE, "Title" );

    Value<String> getTitle();
    void setTitle( String value );

    // *** Details ***

    @XmlBinding( path = "details" )
    @Label( standard = "details" )
    @LongString
    @NonNullValue
    ValueProperty PROP_DETAILS = new ValueProperty( TYPE, "Details" );

    Value<String> getDetails();
    void setDetails( String value );

    // *** ProductVersion ***

    @Type( base = ProductVersion.class )
    @XmlBinding( path = "version" )
    @Label( standard = "version" )
    @DefaultValue( "2.5" )
    ValueProperty PROP_PRODUCT_VERSION = new ValueProperty( TYPE, "ProductVersion" );

    Value<ProductVersion> getProductVersion();
    void setProductVersion( String value );
    void setProductVersion( ProductVersion value );

    // *** ProductStage ***

    @Type( base = ProductStage.class )
    @XmlBinding( path = "stage" )
    @Label( standard = "stage" )
    @DefaultValue( "final" )
    ValueProperty PROP_PRODUCT_STAGE = new ValueProperty( TYPE, "ProductStage" );

    Value<ProductStage> getProductStage();
    void setProductStage( String value );
    void setProductStage( ProductStage value );

    // *** Hardware ***

    @Type( base = IHardwareItem.class )
    @ListPropertyXmlBinding( mappings = { @ListPropertyXmlBindingMapping( element = "hardware-item", type = IHardwareItem.class ) } )
    @Label( standard = "hardware" )
    ListProperty PROP_HARDWARE = new ListProperty( TYPE, "Hardware" );

    ModelElementList<IHardwareItem> getHardware();
}
```

As you can see in the above code listing, a model element definition in Sapphire is composed of a series of blocks, each of which defines one property of the model element. Each property block consists of a PROP_* field that declares the property, the metadata attached to it in the form of annotations, and the accessor methods. All metadata about the model element is stored in the interface; there are no external files. When this interface is compiled, Java persists these annotations in the .class file, and Sapphire is able to read them at runtime.
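Reading this metadata at runtime relies on the annotation types being declared with `RetentionPolicy.RUNTIME`. The self-contained sketch below shows the mechanism with plain Java reflection, reading an annotation off a `PROP_*`-style interface field. `DemoLabel`, `DemoClassOnly`, `IDemoReport` and `MetadataReader` are hypothetical stand-ins, not Sapphire types.

```java
import java.lang.annotation.ElementType;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;
import java.lang.reflect.Field;

// Hypothetical stand-in for @Label. RUNTIME retention keeps it visible to reflection.
@Retention( RetentionPolicy.RUNTIME )
@Target( ElementType.FIELD )
@interface DemoLabel {
    String standard();
}

// For contrast: CLASS retention is recorded in the .class file
// but is not available through reflection at runtime.
@Retention( RetentionPolicy.CLASS )
@Target( ElementType.FIELD )
@interface DemoClassOnly {
}

// Hypothetical model interface; the annotated constant plays the role of a PROP_* field.
interface IDemoReport {
    @DemoLabel( standard = "title" )
    @DemoClassOnly
    String PROP_TITLE = "Title";
}

final class MetadataReader {
    static Field field( Class<?> type, String name ) {
        try {
            return type.getField( name ); // interface fields are implicitly public
        } catch( NoSuchFieldException e ) {
            throw new IllegalArgumentException( "No field " + name + " on " + type.getName(), e );
        }
    }

    // Returns the label of a PROP_* field, or null if it carries no @DemoLabel.
    static String labelOf( Class<?> type, String fieldName ) {
        DemoLabel label = field( type, fieldName ).getAnnotation( DemoLabel.class );
        return label == null ? null : label.standard();
    }
}
```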

Sapphire has three types of properties: value, element and list. Value properties hold simple data, such as strings, integers and enums. Any object that is immutable and can be serialized to a string can be stored in a value property. An element property holds a reference to another model element. You can specify whether this nested model element should always exist or whether it should be possible to create and delete it. A list property holds zero or more model elements. A list can be homogeneous (it holds elements of only one type) or heterogeneous (it holds elements of several specified types).
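A toy model of the element and list semantics described above may help. In this sketch, an element property either returns its child or creates it on demand (the same shape as the `getBugReport( boolean createIfNecessary )` accessor that appears in IFileBugReportOp), and a list property accepts only the element types it was declared with: one type makes it homogeneous, several make it heterogeneous. `ToyElementProperty` and `ToyListProperty` are hypothetical and are not Sapphire's `ElementProperty` or `ListProperty`.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.List;
import java.util.function.Supplier;

// Element property whose child may be absent, created on demand or deleted.
final class ToyElementProperty<E> {
    private final Supplier<E> factory;
    private E element;

    ToyElementProperty( Supplier<E> factory ) {
        this.factory = factory;
    }

    E get() {
        return element;
    }

    // Creates the child on first request when createIfNecessary is true.
    E get( boolean createIfNecessary ) {
        if( element == null && createIfNecessary ) {
            element = factory.get();
        }
        return element;
    }

    void remove() {
        element = null;
    }
}

// List property restricted to its declared element types (exact class match, for simplicity).
final class ToyListProperty {
    private final List<Class<?>> allowedTypes;
    private final List<Object> items = new ArrayList<>();

    ToyListProperty( Class<?>... allowedTypes ) {
        this.allowedTypes = Arrays.asList( allowedTypes );
    }

    void add( Object item ) {
        if( !allowedTypes.contains( item.getClass() ) ) {
            throw new IllegalArgumentException( "Type not allowed: " + item.getClass().getSimpleName() );
        }
        items.add( item );
    }

    List<Object> items() {
        return Collections.unmodifiableList( items );
    }
}
```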

Using a combination of list and element properties, it is possible to create an arbitrary model hierarchy. The IBugReport listing contains one list property. It is homogeneous and references the IHardwareItem element type. Let's look at that type next.

```java
@GenerateXmlBinding
public interface IHardwareItem extends IModelElementForXml, IRemovable
{
    ModelElementType TYPE = new ModelElementType( IHardwareItem.class );

    // *** Type ***

    @Type( base = HardwareType.class )
    @XmlBinding( path = "type" )
    @Label( standard = "type" )
    @NonNullValue
    ValueProperty PROP_TYPE = new ValueProperty( TYPE, "Type" );

    Value<HardwareType> getType();
    void setType( String value );
    void setType( HardwareType value );

    // *** Make ***

    @XmlBinding( path = "make" )
    @Label( standard = "make" )
    @NonNullValue
    ValueProperty PROP_MAKE = new ValueProperty( TYPE, "Make" );

    Value<String> getMake();
    void setMake( String value );

    // *** ItemModel ***

    @XmlBinding( path = "model" )
    @Label( standard = "model" )
    ValueProperty PROP_ITEM_MODEL = new ValueProperty( TYPE, "ItemModel" );

    Value<String> getItemModel();
    void setItemModel( String value );

    // *** Description ***

    @XmlBinding( path = "description" )
    @Label( standard = "description" )
    @LongString
    ValueProperty PROP_DESCRIPTION = new ValueProperty( TYPE, "Description" );

    Value<String> getDescription();
    void setDescription( String value );
}
```

The IHardwareItem listing should look very similar to IBugReport and that's the point. A Sapphire model is just a collection of Java interfaces that are annotated in a certain way and reference each other.

A bug report is contained in IFileBugReportOp, which serves as the top-level type in the model.

```java
@GenerateXmlBindingModelImpl
@RootXmlBinding( elementName = "report" )
public interface IFileBugReportOp extends IModelForXml
{
    ModelElementType TYPE = new ModelElementType( IFileBugReportOp.class );

    // *** BugReport ***

    @Type( base = IBugReport.class )
    @Label( standard = "bug report" )
    @XmlBinding( path = "bug" )
    ElementProperty PROP_BUG_REPORT = new ElementProperty( TYPE, "BugReport" );

    IBugReport getBugReport();
    IBugReport getBugReport( boolean createIfNecessary );
}
```

Let's now look at the last bit of code that goes with this model: the enums.

```java
// Each public enum lives in its own source file.

@Label( standard = "type", full = "hardware type" )
public enum HardwareType
{
    @Label( standard = "CPU" )
    CPU,

    @Label( standard = "main board" )
    @EnumSerialization( primary = "Main Board" )
    MAIN_BOARD,

    @Label( standard = "RAM" )
    RAM,

    @Label( standard = "video controller" )
    @EnumSerialization( primary = "Video Controller" )
    VIDEO_CONTROLLER,

    @Label( standard = "storage" )
    @EnumSerialization( primary = "Storage" )
    STORAGE,

    @Label( standard = "other" )
    @EnumSerialization( primary = "Other" )
    OTHER
}

@Label( standard = "product stage" )
public enum ProductStage
{
    @Label( standard = "alpha" )
    ALPHA,

    @Label( standard = "beta" )
    BETA,

    @Label( standard = "final" )
    FINAL
}

@Label( standard = "product version" )
public enum ProductVersion
{
    @Label( standard = "1.0" )
    @EnumSerialization( primary = "1.0" )
    V_1_0,

    @Label( standard = "1.5" )
    @EnumSerialization( primary = "1.5" )
    V_1_5,

    @Label( standard = "1.6" )
    @EnumSerialization( primary = "1.6" )
    V_1_6,

    @Label( standard = "2.0" )
    @EnumSerialization( primary = "2.0" )
    V_2_0,

    @Label( standard = "2.3" )
    @EnumSerialization( primary = "2.3" )
    V_2_3,

    @Label( standard = "2.4" )
    @EnumSerialization( primary = "2.4" )
    V_2_4,

    @Label( standard = "2.5" )
    @EnumSerialization( primary = "2.5" )
    V_2_5
}
```

You can use any enum as the type of a Sapphire value property. Here, once again, you see the Sapphire pattern of using Java annotations to attach metadata to model particles. In this case, the annotations specify how Sapphire should present enum items to the user and how these items should be serialized to string form.
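To illustrate the serialization side, here is a sketch of how annotations on enum constants can drive the conversion between an enum value and its string form. `DemoSerialization`, `DemoVersion`, `DemoStage` and `EnumCodec` are hypothetical stand-ins for `@EnumSerialization` and the sample's enums. Falling back to the constant name and comparing case-insensitively are assumptions made for this sketch (they let a default such as "final" resolve to `FINAL`); the article does not say exactly how Sapphire matches values.

```java
import java.lang.annotation.ElementType;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;

// Hypothetical stand-in for @EnumSerialization, reduced to the primary form.
@Retention( RetentionPolicy.RUNTIME )
@Target( ElementType.FIELD )
@interface DemoSerialization {
    String primary();
}

enum DemoVersion {
    @DemoSerialization( primary = "1.0" ) V_1_0,
    @DemoSerialization( primary = "2.5" ) V_2_5
}

enum DemoStage { ALPHA, BETA, FINAL }

final class EnumCodec {
    // Uses the annotated primary form if present, otherwise the constant name.
    static String serialize( Enum<?> value ) {
        try {
            DemoSerialization s = value.getDeclaringClass()
                .getField( value.name() )
                .getAnnotation( DemoSerialization.class );
            return s != null ? s.primary() : value.name();
        } catch( NoSuchFieldException e ) {
            throw new IllegalStateException( e ); // enum constants are always public fields
        }
    }

    // Returns the constant whose serialized form matches the text (ignoring case),
    // or null when nothing matches, e.g. for "BETA2".
    static <E extends Enum<E>> E parse( Class<E> type, String text ) {
        for( E constant : type.getEnumConstants() ) {
            if( serialize( constant ).equalsIgnoreCase( text ) ) {
                return constant;
            }
        }
        return null;
    }
}
```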

Then, The UI

The bulk of the work in writing UI using Sapphire is modeling the data that you want to present to the user. Once the model is done, defining the UI is simply a matter of arranging the properties on the screen. This is done via an XML file.

```xml
<definition>
    <import>
        <bundle>org.eclipse.sapphire.samples</bundle>
        <package>org.eclipse.sapphire.samples.ezbug</package>
    </import>
    <composite>
        <id>bug.report</id>
        <content>
            <property-editor>CustomerId</property-editor>
            <property-editor>Title</property-editor>
            <property-editor>
                <property>Details</property>
                <hint>
                    <name>expand.vertically</name>
                    <value>true</value>
                </hint>
            </property-editor>
            <property-editor>ProductVersion</property-editor>
            <property-editor>ProductStage</property-editor>
            <property-editor>
                <property>Hardware</property>
                <child-property>
                    <name>Type</name>
                </child-property>
                <child-property>
                    <name>Make</name>
                </child-property>
                <child-property>
                    <name>ItemModel</name>
                </child-property>
            </property-editor>
            <composite>
                <indent>true</indent>
                <content>
                    <separator>
                        <label>Details</label>
                    </separator>
                    <switching-panel>
                        <list-selection-controller>
                            <property>Hardware</property>
                        </list-selection-controller>
                        <panel>
                            <key>IHardwareItem</key>
                            <content>
                                <property-editor>
                                    <property>Description</property>
                                    <hint>
                                        <name>show.label.above</name>
                                        <value>true</value>
                                    </hint>
                                    <hint>
                                        <name>height</name>
                                        <value>5</value>
                                    </hint>
                                </property-editor>
                            </content>
                        </panel>
                        <default-panel>
                            <content>
                                <label>Select a hardware item above to view or edit additional parameters.</label>
                            </content>
                        </default-panel>
                    </switching-panel>
                </content>
            </composite>
        </content>
        <hint>
            <name>expand.vertically</name>
            <value>true</value>
        </hint>
        <hint>
            <name>width</name>
            <value>600</value>
        </hint>
        <hint>
            <name>height</name>
            <value>500</value>
        </hint>
    </composite>
    <dialog>
        <id>bug.report.dialog</id>
        <label>Create Bug Report (Sapphire Sample)</label>
        <initial-focus>Title</initial-focus>
        <content>
            <composite-ref>
                <id>bug.report</id>
            </composite-ref>
        </content>
        <hint>
            <name>expand.vertically</name>
            <value>true</value>
        </hint>
    </dialog>
</definition>
```

A Sapphire UI definition is a hierarchy of parts. At the lowest level are property editors and a few other basic parts, such as separators. These are aggregated into various kinds of composites until the entire part hierarchy is defined. Add a few hints here and there to guide the UI renderer, and the UI definition is complete. Note the top-level composite and dialog elements. These are parts that you can reuse to build more complex UI definitions or reference externally from Java code.
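The part hierarchy and the reuse of top-level definitions can be sketched in a few Java types. This is a hypothetical, heavily simplified model (`Part`, `PropertyEditorPart`, `CompositePart` and `CompositeRefPart` are not Sapphire classes) that renders a text outline instead of widgets. It only shows how a `composite-ref` can resolve a definition by id, so that one composite can serve several containers.

```java
import java.util.List;
import java.util.Map;

// Hypothetical part model: each part appends indented outline lines for itself.
interface Part {
    void render( Map<String, Part> definitions, String indent, List<String> out );
}

final class PropertyEditorPart implements Part {
    private final String property;

    PropertyEditorPart( String property ) {
        this.property = property;
    }

    @Override
    public void render( Map<String, Part> definitions, String indent, List<String> out ) {
        out.add( indent + "property-editor " + property );
    }
}

final class CompositePart implements Part {
    private final List<Part> children;

    CompositePart( List<Part> children ) {
        this.children = children;
    }

    @Override
    public void render( Map<String, Part> definitions, String indent, List<String> out ) {
        out.add( indent + "composite" );
        for( Part child : children ) {
            child.render( definitions, indent + "  ", out );
        }
    }
}

// Looks up a top-level definition by id when rendered, which is what enables reuse.
final class CompositeRefPart implements Part {
    private final String id;

    CompositeRefPart( String id ) {
        this.id = id;
    }

    @Override
    public void render( Map<String, Part> definitions, String indent, List<String> out ) {
        Part target = definitions.get( id );
        if( target == null ) {
            throw new IllegalArgumentException( "Unknown definition id: " + id );
        }
        target.render( definitions, indent, out );
    }
}
```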

Next we will write a little bit of Java code to open the dialog that we defined.

```java
// FileBugReportOp is the implementation class generated from IFileBugReportOp.
final IFileBugReportOp op = new FileBugReportOp( new ModelStoreForXml( new ByteArrayModelStore() ) );
final IBugReport report = op.getBugReport( true );

// shell is the parent SWT Shell; Dialog is JFace's org.eclipse.jface.dialogs.Dialog.
final SapphireDialog dialog
    = new SapphireDialog( shell, report, "org.eclipse.sapphire.samples/sdef/EzBug.sdef!bug.report.dialog" );

if( dialog.open() == Dialog.OK )
{
    // Do something. User input is found in the bug report model.
}
```

Pretty simple, right? We create the model and then use the provided SapphireDialog class to instantiate the UI, passing it the model instance and a reference to the UI definition. The pseudo-URI used to reference the UI definition is simply the bundle id, followed by the path within that bundle to the file holding the UI definition, followed by "!" and the id of the definition to use.
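The reference format is easy to take apart by hand. The hypothetical helper below splits a string of the form `<bundle id>/<path within bundle>!<definition id>` into its three pieces. It only mirrors the format as described here, assuming the bundle id contains no "/" and the definition id contains no "!"; Sapphire resolves these references itself, and `DefinitionRef` is not part of its API.

```java
// Hypothetical value object for "<bundle id>/<path within bundle>!<definition id>".
final class DefinitionRef {
    final String bundleId;
    final String path;
    final String definitionId;

    private DefinitionRef( String bundleId, String path, String definitionId ) {
        this.bundleId = bundleId;
        this.path = path;
        this.definitionId = definitionId;
    }

    static DefinitionRef parse( String reference ) {
        int slash = reference.indexOf( '/' );     // end of the bundle id
        int bang = reference.lastIndexOf( '!' );  // start of the definition id
        if( slash <= 0 || bang <= slash + 1 || bang == reference.length() - 1 ) {
            throw new IllegalArgumentException( "Malformed definition reference: " + reference );
        }
        return new DefinitionRef(
            reference.substring( 0, slash ),
            reference.substring( slash + 1, bang ),
            reference.substring( bang + 1 ) );
    }
}
```

For the dialog reference above, this yields the bundle `org.eclipse.sapphire.samples`, the path `sdef/EzBug.sdef` and the definition id `bug.report.dialog`.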

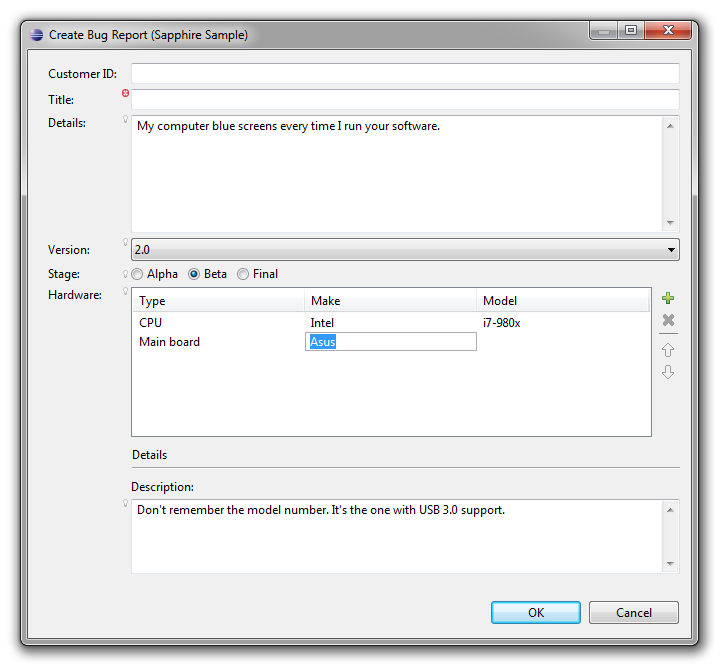

Let's run it and see what we get...

There you have it: a professional, rich UI backed by your model, with none of the fuss of configuring widgets, coaxing layouts into doing what you need or debugging data-binding issues.

One Step Further

A dialog is nice, but really a wizard would be better suited for filing a bug report. Can Sapphire do that? Sure. Let's first go back to the model. A wizard is a UI pattern for configuring and then executing an operation. Our model is not really an operation yet. We can create and populate a bug report, but then we don't know what to do with it.

Any Sapphire model element can be turned into an operation by adding an execute method. We will do that now with IFileBugReportOp. In particular, IFileBugReportOp will be changed to also extend IExecutableModelElement and will acquire the following method definition:

```java
// *** Method: execute ***

@DelegateImplementation( FileBugReportOpMethods.class )
IStatus execute( IProgressMonitor monitor );
```

Note how the execute method is specified. We don't want to modify the generated code to implement it, so we use delegation instead. The @DelegateImplementation annotation can be used to delegate any method on a model element to an implementation located in another class. The Sapphire annotation processor will do the necessary hookup.

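```java
import org.eclipse.core.runtime.IProgressMonitor;
import org.eclipse.core.runtime.IStatus;
import org.eclipse.core.runtime.Status;

public class FileBugReportOpMethods
{
    public static final IStatus execute( IFileBugReportOp context, IProgressMonitor monitor )
    {
        // Do something here with context.getBugReport(), such as submitting it.
        return Status.OK_STATUS;
    }
}
```

The delegate method implementation must match the method being delegated, with two changes: (a) it must be static, and (b) it must take the model element as the first parameter.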
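Conceptually, the hookup amounts to an instance method that forwards to the static delegate, passing the model element itself as the first argument. The sketch below writes that forwarding by hand with simplified, hypothetical types (`IDemoOp`, `DemoOp` and `DemoOpMethods`, a `String` result instead of `IStatus`, no progress monitor). It is not the code that the Sapphire annotation processor actually generates.

```java
// Hypothetical model element with an operation method.
interface IDemoOp {
    String getTitle();
    String execute( String destination );
}

// Delegate: static, and takes the model element as its first parameter.
final class DemoOpMethods {
    static String execute( IDemoOp context, String destination ) {
        return "Filed '" + context.getTitle() + "' to " + destination;
    }
}

// Stand-in for a generated implementation: execute simply forwards to the delegate.
final class DemoOp implements IDemoOp {
    private final String title;

    DemoOp( String title ) {
        this.title = title;
    }

    @Override
    public String getTitle() {
        return title;
    }

    @Override
    public String execute( String destination ) {
        return DemoOpMethods.execute( this, destination );
    }
}
```

Keeping the logic in a separate static method means the implementation class can be regenerated at any time without losing hand-written code.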

Now that we have completed the bug reporting operation, we can return to the UI definition file and add the following:

```xml
<wizard>
    <id>wizard</id>
    <label>Create Bug Report (Sapphire Sample)</label>
    <page>
        <id>main.page</id>
        <label>Create Bug Report</label>
        <description>Create and submit a bug report.</description>
        <initial-focus>Title</initial-focus>
        <content>
            <with>
                <property>BugReport</property>
                <content>
                    <composite-ref>
                        <id>bug.report</id>
                    </composite-ref>
                </content>
            </with>
        </content>
        <hint>
            <name>expand.vertically</name>
            <value>true</value>
        </hint>
    </page>
</wizard>
```

The above defines a one-page wizard by reusing the composite definition created earlier. Now back to Java to use the wizard...

```java
final IFileBugReportOp op = new FileBugReportOp( new ModelStoreForXml( new ByteArrayModelStore() ) );
op.getBugReport( true ); // Force creation of the bug report.

final SapphireWizard<IFileBugReportOp> wizard
    = new SapphireWizard<IFileBugReportOp>( op, "org.eclipse.sapphire.samples/sdef/EzBug.sdef!wizard" );

// WizardDialog is JFace's org.eclipse.jface.wizard.WizardDialog; shell is the parent SWT Shell.
final WizardDialog dialog = new WizardDialog( shell, wizard );
dialog.open();
```

SapphireWizard invokes the operation's execute method when the user finishes the wizard. That means we don't have to act on the result of the open call: the execute method will have completed by the time open returns to the caller.

The above code pattern works well if you are launching the wizard from a custom action, but if you need to contribute a wizard to an extension point, you can extend SapphireWizard to give your wizard a zero-argument constructor that creates the operation and references the correct UI definition.

Let's run it...

One More Step

Now that we have a system for submitting bug reports, it would be nice to have a way to maintain a collection of these reports. It would be even better if we could reuse some of our existing code to do this. Back to the model.

The first step is to create an IBugDatabase type, which will hold a collection of bug reports. By now you should have a pretty good idea of what that will look like.

```java
@GenerateXmlBindingModelImpl
@RootXmlBinding( elementName = "bug-database" )
public interface IBugDatabase extends IModelForXml
{
    ModelElementType TYPE = new ModelElementType( IBugDatabase.class );

    // *** BugReports ***

    @Type( base = IBugReport.class )
    @Label( standard = "bug report" )
    @ListPropertyXmlBinding( mappings = { @ListPropertyXmlBindingMapping( element = "bug", type = IBugReport.class ) } )
    ListProperty PROP_BUG_REPORTS = new ListProperty( TYPE, "BugReports" );

    ModelElementList<IBugReport> getBugReports();
}
```

That was easy. Now let's go back to the UI definition file.

Sapphire simplifies the creation of multi-page editors. It also has very good integration with the WTP XML editor, which makes it easy to create the very typical two-page editor with a form-based page and a linked source page showing the underlying XML. The linkage is fully bi-directional.
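The general principle behind this kind of two-way linkage, and behind the earlier advice that UI components should talk through the model rather than to each other, can be shown with a small observer-pattern sketch. `ObservableValue` and `ToyView` are hypothetical, and this is not a description of how Sapphire's WTP integration is implemented. Each view writes user edits to the shared value and refreshes itself from change events, so neither view references the other.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Objects;
import java.util.function.Consumer;

// Hypothetical observable model value shared by several views.
final class ObservableValue {
    private String value;
    private final List<Consumer<String>> listeners = new ArrayList<>();

    void addListener( Consumer<String> listener ) {
        listeners.add( listener );
    }

    String get() {
        return value;
    }

    void set( String newValue ) {
        if( Objects.equals( value, newValue ) ) {
            return; // No change, no event: avoids update loops between views.
        }
        value = newValue;
        for( Consumer<String> listener : listeners ) {
            listener.accept( newValue );
        }
    }
}

// A view that only talks to the model, never to other views.
final class ToyView {
    private final ObservableValue model;
    String shown;

    ToyView( ObservableValue model ) {
        this.model = model;
        model.addListener( newValue -> shown = newValue );
    }

    void userTyped( String text ) {
        model.set( text );
    }
}
```

For example, a "form" view and a "source" view built on the same `ObservableValue` each see the other's edits without holding a reference to it.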

To create an editor, we start by defining the structure of the pages that Sapphire will render. Sapphire currently supports only one editor page layout, but it is a very flexible layout that works for a lot of scenarios: a tree outline of content on the left and a series of sections on the right that change depending on the selection in the outline.

```xml
<editor-page>
    <id>editor.page</id>
    <page-header-text>Bug Database (Sapphire Sample)</page-header-text>
    <initial-selection>Bug Reports</initial-selection>
    <root-node>
        <node>
            <label>Bug Reports</label>
            <section>
                <description>Use this editor to manage your bug database.</description>
                <content>
                    <action-link>
                        <action-id>node:add</action-id>
                        <label>Add a bug report</label>
                    </action-link>
                </content>
            </section>
            <node-list>
                <property>BugReports</property>
                <node-template>
                    <dynamic-label>
                        <property>Title</property>
                        <null-value-label>&lt;bug&gt;</null-value-label>
                    </dynamic-label>
                    <section>
                        <label>Bug Report</label>
                        <content>
                            <composite-ref>
                                <id>bug.report</id>
                            </composite-ref>
                        </content>
                    </section>
                </node-template>
            </node-list>
        </node>
    </root-node>
</editor-page>
```

You can see that the definition centers on the outline. As the outline is defined, the definition traverses the model, and sections attached to the various nodes acquire their context model element from their node. The outline can nest arbitrarily deep, and you can even define recursive structures by externalizing node definitions, assigning ids to them and then referencing those definitions, similarly to how this sample references an existing composite definition.
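The node-list and dynamic-label behavior can be approximated in plain Java: one child node per list element, labeled with the element's Title, or with the null-value label when Title is not set. `OutlineNode` and `OutlineSketch` are hypothetical names, and report titles are passed as plain strings for brevity.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical outline node: a label plus child nodes, which may nest arbitrarily deep.
final class OutlineNode {
    final String label;
    final List<OutlineNode> children = new ArrayList<>();

    OutlineNode( String label ) {
        this.label = label;
    }
}

final class OutlineSketch {
    // Builds a root node with one child per title; a null title falls back
    // to the null-value label, as <null-value-label> does in the definition.
    static OutlineNode build( String rootLabel, List<String> titles, String nullValueLabel ) {
        OutlineNode root = new OutlineNode( rootLabel );
        for( String title : titles ) {
            root.children.add( new OutlineNode( title != null ? title : nullValueLabel ) );
        }
        return root;
    }
}
```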

The next step is to create the actual editor. Sapphire includes several editor classes for you to choose from. In this article we will use the editor class that's specialized for the case where you are editing an XML file and you want to have an editor page rendered by Sapphire along with an XML source page.

```java
public final class BugDatabaseEditor extends SapphireEditorForXml
{
    public BugDatabaseEditor()
    {
        super( "org.eclipse.sapphire.samples" );
        setEditorDefinitionPath( "org.eclipse.sapphire.samples/sdef/EzBug.sdef/editor.page" );
    }

    @Override
    protected IModel createModel( final ModelStore modelStore )
    {
        // BugDatabase is the implementation class generated from IBugDatabase.
        return new BugDatabase( (ModelStoreForXml) modelStore );
    }
}
```

Finally, we need to register the editor. There are a variety of options for doing this, but covering all of them is outside the scope of this article. For simplicity, we will register the editor as the default choice for files named "bugs.xml".

```xml
<extension point="org.eclipse.ui.editors">
    <editor
        class="org.eclipse.sapphire.samples.ezbug.ui.BugDatabaseEditor"
        default="true"
        filenames="bugs.xml"
        id="org.eclipse.sapphire.samples.ezbug.ui.BugDatabaseEditor"
        name="Bug Database Editor (Sapphire Sample)"/>
</extension>
```

That's it. We are done creating the editor. After launching Eclipse and creating a bugs.xml file, you should see an editor that looks like this:

Sapphire really shines in complex cases like this, where a form UI sits on top of a source file that users might edit by hand. In the above screen capture, the user has manually entered "BETA2" for the product stage in the source view. A problem marker appears next to the property editor, and clicking that marker opens the yellow assistance popup. The popup displays the problem message along with additional information about the property and the available actions. The "Show in source" action, for instance, immediately jumps to the editor's source page and highlights the text region associated with this property. This is very valuable when you must deal with large files. These facilities and many others are available out of the box with Sapphire, with no extra effort from the developer.
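The check behind that marker can be pictured as plain validation of the raw text found in the source file: a required value must be present, and an enum-typed value must match one of the known serialized forms. `ValueValidator` and its message wording are hypothetical; Sapphire produces its own messages and performs its own validation.

```java
import java.util.ArrayList;
import java.util.List;

final class ValueValidator {
    // Returns human-readable problems for one raw property value; an empty list means valid.
    // allowedValues may be null when the property accepts any text.
    static List<String> validate( String label, String raw, boolean required, List<String> allowedValues ) {
        List<String> problems = new ArrayList<>();
        if( raw == null || raw.trim().isEmpty() ) {
            if( required ) {
                problems.add( label + " must be specified." );
            }
            return problems;
        }
        if( allowedValues != null && !allowedValues.contains( raw ) ) {
            problems.add( "\"" + raw + "\" is not a valid value for " + label + "." );
        }
        return problems;
    }
}
```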

Conclusion

Now that you've been introduced to what Sapphire can do, compare it to how you are currently writing UI code. All of the code presented in this article can be written by a developer with just a few weeks of Sapphire experience in an hour or two. How long would it take you to create something comparable using your current method of choice?

I hope that this article has piqued your interest in Sapphire. Oracle is committed to bringing this technology to the open source community. We have proposed a project at the Eclipse Foundation. If you are interested, post a message on the project's forum, introduce yourself and describe your interest. We are actively seeking both consumers of this technology and potential partners to join the effort and help us take this technology in directions that we have not yet anticipated.